AI progress and a landscape of problem conditions

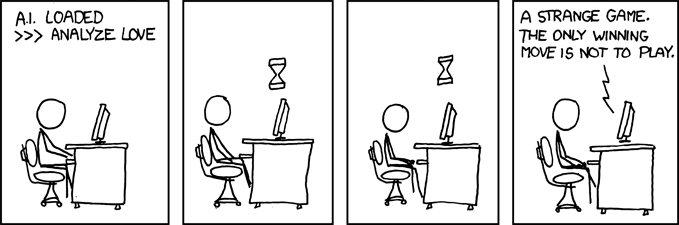

I’ve mentioned this ‘Zone of Hubris’ idea in a couple of earlier posts, and it’s time I made it clear what I mean by this slightly over-blown phrase.

The basic idea is that the sort of AI we are making at the moment is being developed against a range of problems with very clear success metrics and relatively high levels of available information. Recent rapid progress is giving rise to significant confidence in our ability to begin to address really useful problems with the aid of AI (nothing in this post relates to currently imaginary super-intelligent Artificial General Intelligence).

This is likely to lead us to seek to apply our shiny new successes more ambitiously – as well we should. But we need to be aware that we have been sharpening these tools in a particular arena, and that it is not at all certain that they will work well in different circumstances.

“Well, of course,” you might say; “we’re quite aware of that – that’s exactly how we’ve been proceeding – moving into new problem domains, realising that our existing tools don’t work, and building new ones”. Well yes, but I would suggest that it hasn’t so much been a case of building new tools as it has been about refining old ones. As is made clear in some earlier posts, most of the building blocks of today’s AI were formulated decades ago, and on top of that, there appears to have been fairly strong selection for problem spaces that are amenable to game/game-theoretic approaches.

‘Hubris’ is defined as ‘excessive or foolish pride or self-confidence’.

Satya Nadella’s call for AI to be collaborative with humanity